In my previous article on vacuum tubes in Issue 103, I talked about linearity. One of our readers requested a more thorough explanation of how linearity relates to audio and music.

There are a few different common uses of the term “linearity” in relation to audio electronics. One refers to the frequency response of an audio circuit.

A “linear frequency response” refers to the ability of a circuit to produce an equal output level at all frequencies within a specified bandwidth, given an equal input level at each of those frequencies.

The audio range of frequencies is commonly thought of as starting at 20 Hz and ending at 20 kHz, which is the average range of frequencies that can be perceived as sound by a healthy human when presented as pure sinusoidal (sine wave) waveforms, heard through loudspeakers or headphones.

This is far from the whole story, though, since live music contains fundamental frequencies as low as 16 Hz (which can be produced by organ pipes) and harmonics extending up to, or beyond, 100 kHz (from certain percussive instruments). Furthermore, sound in nature never consists of pure sine waves alone, but of complex waves in which many frequencies are blended together.

While we cannot “hear” 16 Hz, we can perceive its presence or absence with our other senses. Also, the ability or inability of audio equipment to linearly reproduce 16 Hz will affect the phase response above 20 Hz, in the range that we do hear!

The phase response describes the relative timing, or phase shift, that a circuit imposes on each of the frequency components of a complex sound. There are mathematical relationships (which can get rather complex) between the frequency and phase response of an electronic circuit. A key point to consider is that frequency response errors outside the 20 Hz – 20 kHz range can cause phase response errors within the 20 Hz – 20 kHz range, at low and high frequencies alike.
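To put rough numbers on this, the sketch below models the band limits of a hypothetical component as two ideal first-order RC filters: a high-pass with its corner at 10 Hz and a low-pass with its corner at 50 kHz. Both corner frequencies are assumptions chosen for illustration, not measurements of any real product. The code prints the level and phase shift at a few frequencies inside the audio band.

```python
import cmath
import math

def first_order_highpass(f: float, fc: float) -> complex:
    """Complex response of an ideal first-order RC high-pass with corner fc (Hz)."""
    s = 1j * f / fc  # normalised frequency
    return s / (1 + s)

def first_order_lowpass(f: float, fc: float) -> complex:
    """Complex response of an ideal first-order RC low-pass with corner fc (Hz)."""
    return 1 / (1 + 1j * f / fc)

def db(h: complex) -> float:
    """Level in dB relative to unity gain."""
    return 20 * math.log10(abs(h))

def phase_deg(h: complex) -> float:
    """Phase shift in degrees."""
    return math.degrees(cmath.phase(h))

# Hypothetical band limits, both outside 20 Hz - 20 kHz.
HP_CORNER = 10.0
LP_CORNER = 50_000.0

for f in (20.0, 100.0, 1_000.0, 10_000.0, 20_000.0):
    h = first_order_highpass(f, HP_CORNER) * first_order_lowpass(f, LP_CORNER)
    print(f"{f:>8.0f} Hz: {db(h):+6.2f} dB, {phase_deg(h):+6.1f} deg")
```

With these assumed corners the level at 20 Hz and 20 kHz stays within about 1 dB of flat, yet the phase shift is roughly +26.5° at 20 Hz and -21.8° at 20 kHz.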

Changing the relative phase between the different frequency components of a complex wave actually changes the wave shape, as mathematically described by the Fourier transform.
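A minimal sketch of this effect, using only the Python standard library: two waveforms are built from the same fundamental and the same third harmonic at one third of its amplitude, differing only in the phase of that harmonic (0° versus 180°, values picked for illustration). A single-bin DFT confirms that the harmonic amplitudes are identical, while the peak values are not.

```python
import cmath
import math

N = 1024  # samples over one period of the fundamental

def waveform(third_harmonic_phase: float) -> list[float]:
    """Fundamental plus a third harmonic at 1/3 amplitude, sampled over one period."""
    return [
        math.sin(2 * math.pi * n / N)
        + (1 / 3) * math.sin(3 * 2 * math.pi * n / N + third_harmonic_phase)
        for n in range(N)
    ]

def harmonic_amplitude(x: list[float], k: int) -> float:
    """Amplitude of the k-th harmonic, computed from a single DFT bin."""
    acc = sum(v * cmath.exp(-2j * math.pi * k * n / len(x)) for n, v in enumerate(x))
    return 2 * abs(acc) / len(x)

a = waveform(0.0)      # third harmonic in phase
b = waveform(math.pi)  # same third harmonic, shifted by 180 degrees

for name, x in (("in phase", a), ("shifted ", b)):
    print(name,
          "h1 =", round(harmonic_amplitude(x, 1), 3),
          "h3 =", round(harmonic_amplitude(x, 3), 3),
          "peak =", round(max(x), 3))
```

The in-phase version peaks at about 0.943 and the shifted one at about 1.333: same frequencies, same amplitudes, different wave shape.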

But is this actually audible? Dr. Milind Kunchur of the University of South Carolina conducted several experiments attempting to define the temporal resolution of the human auditory system. The groundbreaking results were published in peer-reviewed academic journals, together with a proposed neurophysiological model that could explain the findings. The findings indicate that our hearing is much more sensitive to time-domain and phase response errors than to frequency-domain errors (frequency response). The temporal resolution found through Dr. Kunchur’s research is on the order of 4.7 µs, which would seem to imply that we should be able to hear frequencies much higher than 20 kHz. It is noteworthy that our temporal resolution does not appear to degrade as much with age, whereas our hearing becomes progressively less sensitive to high frequencies as we age.

We have essentially just stumbled upon a second potential use of the term “linearity.” This would be phase linearity.

Both frequency and phase response can easily be measured. The frequency response of an audio component or loudspeaker is often proudly displayed in product specification sheets – while the phase response is usually absent. Moreover, the frequency response specs are usually stated in terms of 20 Hz – 20 kHz +/-1 dB, which tells us absolutely nothing about the product’s actual sound.


This is not to say that it is not useful to measure the frequency response. On the contrary, I believe we would benefit from more thorough measurements, conducted over a much wider range, such as measuring both frequency and phase response from 10 Hz – 100 kHz, and presenting the data as (graphical) plots rather than as just numbers.

(While measuring the frequency and phase response of a high-performance disk-cutting amplifier I designed some years ago, even the 10 Hz – 100 kHz plots were just straight lines! A 20 Hz – 20 kHz plot would have said much less about this amplifier’s performance.)

Journal of the Audio Engineering Society

The benefit of an amplifier having such a wide bandwidth is that it can reproduce a very accurate 1 kHz square wave, which is a good indicator of transient performance. What does this mean in terms of reproducing music? Phase response errors alter the shape of a square wave passing through the circuit and, in much the same way, alter the sound of fast transients in music, such as percussive sounds and vocal consonants.
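The square-wave argument can be sketched numerically. The example below synthesizes a 1 kHz square wave from its odd harmonics (truncated at the 99th) and then passes every harmonic through a hypothetical first-order all-pass filter with its corner at 5 kHz. An all-pass filter leaves every amplitude untouched and changes only the phase, so any difference in the resulting wave is due purely to phase response. The corner frequency and the number of harmonics are assumptions for illustration.

```python
import math

F0 = 1_000.0                  # square-wave fundamental, Hz
HARMONICS = range(1, 100, 2)  # odd harmonics 1..99 (truncated Fourier series)
ALLPASS_CORNER = 5_000.0      # hypothetical all-pass corner frequency, Hz
N = 500                       # samples over one period

def allpass_phase(f: float, fc: float) -> float:
    """Phase shift (radians) of a first-order all-pass; its gain is 1 at every f."""
    return -2.0 * math.atan(f / fc)

def square_wave(t: float, phase_error: bool) -> float:
    """Sum of odd harmonics (4/pi)*sin(2*pi*k*F0*t)/k, optionally phase-shifted."""
    total = 0.0
    for k in HARMONICS:
        f = k * F0
        shift = allpass_phase(f, ALLPASS_CORNER) if phase_error else 0.0
        total += (4.0 / math.pi) * math.sin(2.0 * math.pi * f * t + shift) / k
    return total

def rms(xs: list[float]) -> float:
    """Root-mean-square value of a list of samples."""
    return math.sqrt(sum(x * x for x in xs) / len(xs))

ts = [n / (N * F0) for n in range(N)]  # one period of the fundamental
clean = [square_wave(t, phase_error=False) for t in ts]
shifted = [square_wave(t, phase_error=True) for t in ts]

print(f"RMS clean        : {rms(clean):.4f}")
print(f"RMS phase-shifted: {rms(shifted):.4f}")  # same: amplitudes are unchanged
print(f"max |difference| : {max(abs(p - q) for p, q in zip(clean, shifted)):.3f}")
```

Both versions have the same RMS level, because every harmonic keeps its amplitude, yet the sample-by-sample difference is large: the wave shape has changed even though the frequency response is perfectly flat.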

The effect of such errors is cumulative. If, say, a component contains one circuit whose phase response errors are just at the threshold of audibility, we might not notice. But what if we have two such circuits in series, such as a preamplifier and a power amplifier that both have phase response errors? Or what if the equipment used in the recording studio introduced phase response errors, and the recording is then played back on a home system that also has phase response issues? In such cases the total error would be well within the region of audibility.
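The accumulation can be sketched by chaining identical stages. In the example below, each stage is modelled as an ideal first-order high-pass (for instance, a coupling network) with a hypothetical corner at 4 Hz. Because the complex responses of stages in series multiply, their levels in dB and their phase shifts add.

```python
import cmath
import math

def highpass(f: float, fc: float) -> complex:
    """Ideal first-order high-pass response at frequency f for corner fc (Hz)."""
    s = 1j * f / fc
    return s / (1 + s)

def chain_response(f: float, corners: list[float]) -> complex:
    """Stages in series: complex responses multiply, so dB and phase add."""
    h = 1 + 0j
    for fc in corners:
        h *= highpass(f, fc)
    return h

STAGE_CORNER = 4.0  # hypothetical corner of each stage, Hz
F = 20.0            # evaluate at the bottom of the audio band

for stages in (1, 2, 4):
    h = chain_response(F, [STAGE_CORNER] * stages)
    print(f"{stages} stage(s): {20 * math.log10(abs(h)):+.2f} dB, "
          f"{math.degrees(cmath.phase(h)):+.1f} deg at {F:.0f} Hz")
```

With these assumed values a single stage shifts 20 Hz by about 11.3° while losing only 0.17 dB; four such stages in series (say, two in the studio chain and two at home) accumulate about 45° of phase shift.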

So what defines the frequency and phase response of a circuit?

Let us take a triode tube circuit as an example. The tube alone cannot do much. In order to amplify audio, it needs to be in a basic circuit with a few additional components around it, plus a power supply. The triode tube’s internal control grid (the part that controls the flow of electrons from the cathode to the anode and modulates the musical signal) is biased at a particular operating point by setting the potential of the grid with respect to the cathode. (See my article in Issue 103 for an explanation of vacuum tube operation.)

The cathode is heated and the anode is held at an elevated (electrical) potential, so current flows between anode and cathode. The current flow is controlled by the grid. A voltage develops across the load connected to the tube, due to the current flowing through it (Ohm’s Law: V = IR, where V is voltage, I is current and R is resistance). As such, applying a DC voltage to the biased grid would change its bias point and hence the current flow, also changing the voltage across the load. This is a basic DC amplifier. If we modulate the grid potential by applying an AC signal to it, then the current through the tube and the voltage across the load will vary in step with that AC signal. This is a typical audio amplifier. As we can see, the triode itself can amplify from 0 Hz (DC) upwards. But can it go on amplifying regardless of frequency?
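This behaviour is usually captured by a simple small-signal model, which is standard textbook material rather than something taken from this article. For a triode with its cathode at signal ground, the voltage gain is A = -μ·R_L / (r_p + R_L), where μ is the amplification factor, r_p the anode (plate) resistance and R_L the anode load. The sketch below evaluates it for hypothetical values (μ = 20, r_p = 10 kΩ, R_L = 47 kΩ), not the figures of any particular tube.

```python
def common_cathode_gain(mu: float, r_p: float, r_load: float) -> float:
    """Textbook small-signal voltage gain of a grounded-cathode triode stage.

    mu: amplification factor; r_p: anode (plate) resistance in ohms;
    r_load: anode load resistance in ohms. The minus sign marks the
    phase inversion between grid and anode.
    """
    return -mu * r_load / (r_p + r_load)

# Hypothetical triode and load values, for illustration only.
MU, R_P, R_LOAD = 20.0, 10_000.0, 47_000.0

gain = common_cathode_gain(MU, R_P, R_LOAD)
print(f"voltage gain: {gain:.2f}")

# The model has no frequency term: the same gain applies to a slow (DC)
# change of grid voltage and to an audio signal of the same size.
print(f"anode swing for a 0.5 V grid swing: {gain * 0.5:.2f} V")
```

Note that the model contains no frequency at all: within its assumptions the stage amplifies DC and audio alike. The limits come from the parasitic effects that real electrodes and components introduce.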

Excerpt from the original Western Electric 300B data sheet.

Past a certain frequency, the amplification becomes progressively smaller. This is primarily because the grid, anode and cathode, being in close proximity to each other, exhibit capacitance. This capacitance, together with the source resistance of the circuit that drives the grid, forms a low-pass filter, which suppresses frequencies above the filter’s cutoff point. Many vacuum tubes were designed to act as radio frequency amplifiers as well, so it is common for a triode to be able to linearly (equally) amplify frequencies from 0 Hz to several megahertz, making it eminently suitable as a near-perfect amplification device for the audio range.
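A rough estimate of where this upper limit lies can be sketched with the standard Miller-effect approximation, a textbook refinement not spelled out above: in an inverting stage, the grid-to-anode capacitance appears at the input multiplied by (1 + |A|), so C_in ≈ C_gk + C_ga·(1 + |A|), and the cutoff is f_c = 1 / (2π·R_s·C_in). The capacitances, gain and source resistances below are hypothetical round numbers, not data-sheet values.

```python
import math

def miller_input_capacitance(c_gk: float, c_ga: float, gain: float) -> float:
    """Approximate input capacitance (farads) of an inverting triode stage."""
    return c_gk + c_ga * (1.0 + abs(gain))

def rc_cutoff(r: float, c: float) -> float:
    """-3 dB corner frequency (Hz) of a first-order RC filter."""
    return 1.0 / (2.0 * math.pi * r * c)

C_GK = 2e-12  # hypothetical grid-cathode capacitance, F
C_GA = 2e-12  # hypothetical grid-anode capacitance, F
GAIN = 16.5   # hypothetical stage gain (magnitude)

c_in = miller_input_capacitance(C_GK, C_GA, GAIN)
print(f"input capacitance: {c_in * 1e12:.0f} pF")
for r_source in (1_000.0, 10_000.0, 100_000.0):
    f_c = rc_cutoff(r_source, c_in)
    print(f"source {r_source / 1e3:>5.0f} kOhm -> cutoff {f_c / 1e3:,.0f} kHz")
```

With these assumed values, a 1 kΩ source leaves the cutoff in the megahertz region, while a 100 kΩ source pulls it down to roughly 43 kHz, close enough to the audio band to produce the in-band phase errors described earlier.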

Sadly, this is not the whole picture. The output signal is superimposed on the DC supply voltage, and in most real-life circuits we must find a way to remove this DC offset and leave only the AC signal at the output. A simple way of accomplishing this is with a capacitor, which blocks the DC and passes the AC. Together with the input resistance of the following circuit, the capacitor forms a high-pass filter, which limits the low-frequency response. The cutoff frequency, below which the response drops off, is set by the capacitance we choose together with that input resistance. Choosing a larger capacitor will improve the low-frequency response, but would significantly increase the cost. Instead of a capacitor, we could use a transformer. Similarly, a transformer with a better low-frequency response would cost much more. These are just two examples of the many similar decisions that must be made at the design stage. It is always a trade-off of performance versus cost.
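The capacitor trade-off can be made concrete with the standard first-order relation f_c = 1 / (2π·R·C), rearranged to size the coupling capacitor. The 100 kΩ input resistance of the following stage and the three target corner frequencies are assumptions for illustration.

```python
import math

def coupling_capacitor(r_in: float, f_c: float) -> float:
    """Capacitance (F) giving a -3 dB high-pass corner f_c (Hz) into r_in (ohms)."""
    return 1.0 / (2.0 * math.pi * r_in * f_c)

def highpass_phase_deg(f: float, f_c: float) -> float:
    """Phase lead (degrees) of a first-order high-pass at frequency f."""
    return math.degrees(math.atan(f_c / f))

R_IN = 100_000.0  # hypothetical input resistance of the following stage, ohms

for f_c in (20.0, 2.0, 0.5):
    c = coupling_capacitor(R_IN, f_c)
    print(f"corner {f_c:>4} Hz: C = {c * 1e6:.3f} uF, "
          f"phase at 20 Hz = {highpass_phase_deg(20.0, f_c):.1f} deg")
```

Moving the corner from 20 Hz down to 0.5 Hz reduces the phase shift at 20 Hz from 45° to about 1.4°, but calls for a capacitor 40 times larger, which is where the cost penalty comes from.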

The performance can almost always be improved at a much higher cost, but past a certain point, the equipment is no longer marketable. Mass manufacturing relies on being able to sell a certain number of units, which necessitates compromises in performance to meet a certain price point. This is why there is still a market for custom audio equipment: in some situations, certain performance requirements must be met regardless of cost, and equipment built to meet them would not appeal to a large enough audience to justify mass manufacturing.

Even with the basic design decisions on paper, the practical implementation of a circuit is a whole new challenge. Tubes of the same type are not all identical in electrical characteristics, so in many cases they need to be tested, selected and matched for a particular circuit, to perform as intended. Inevitably, this leads to rejected tubes, increasing the manufacturing cost.

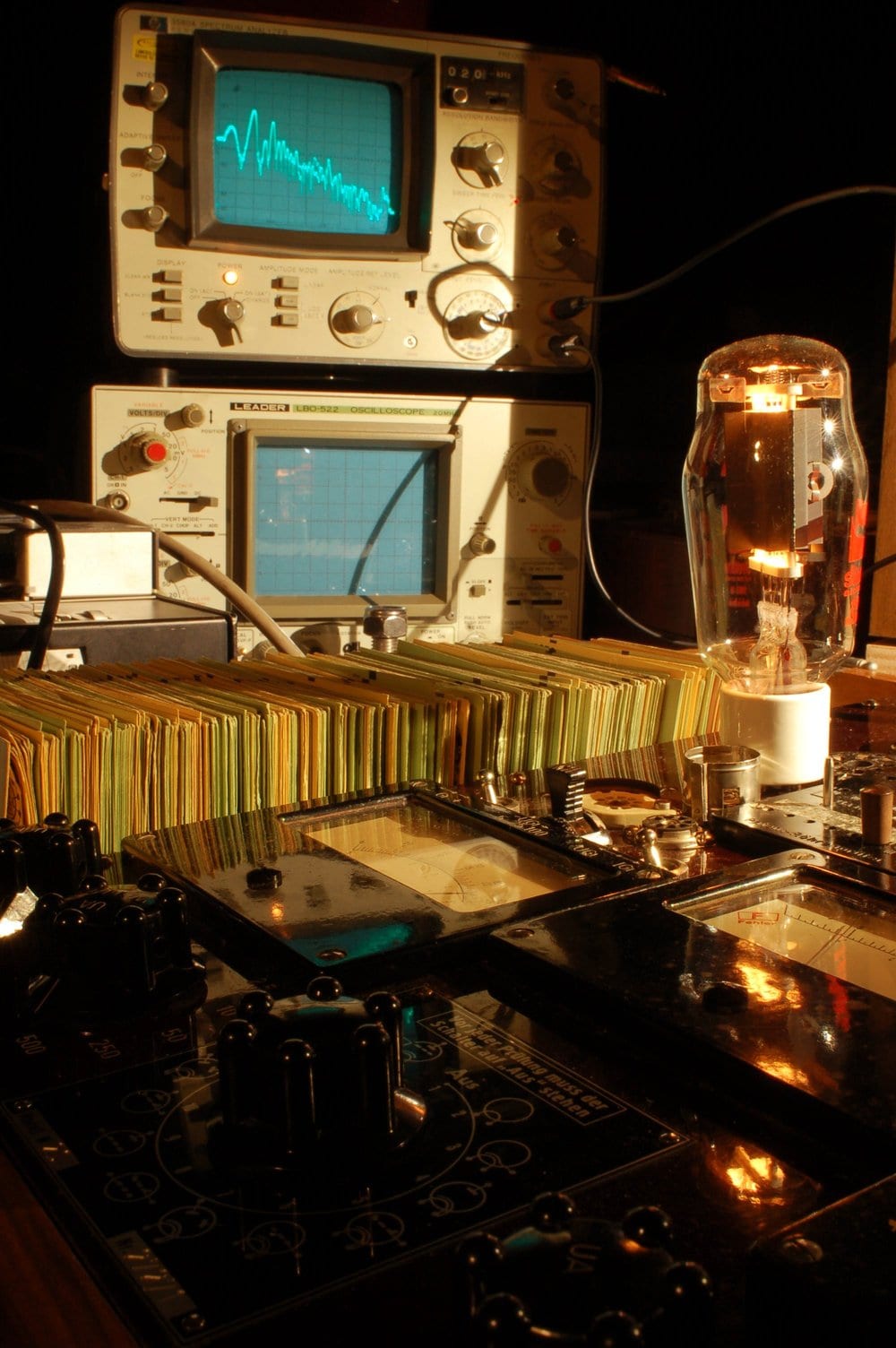

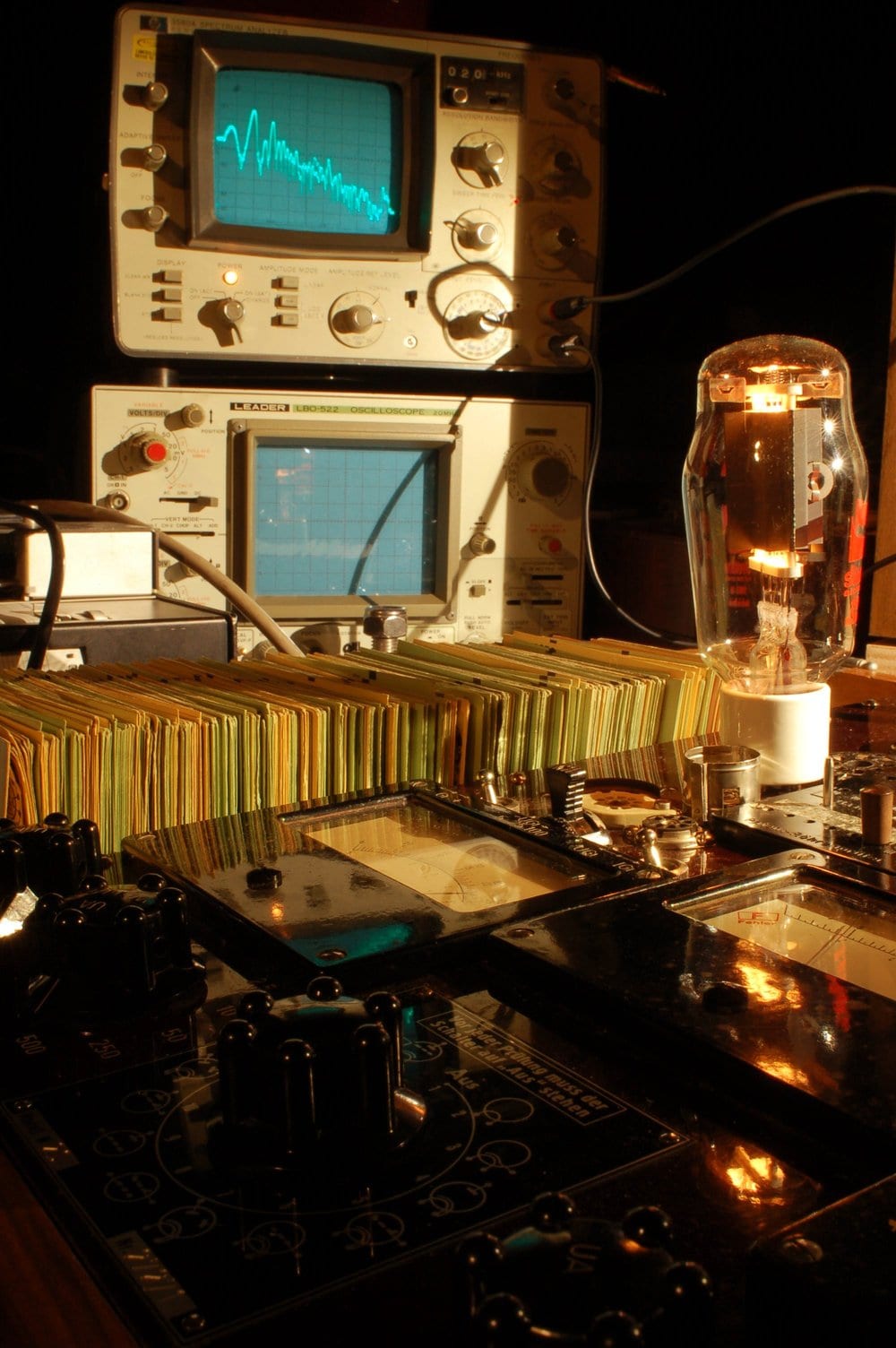

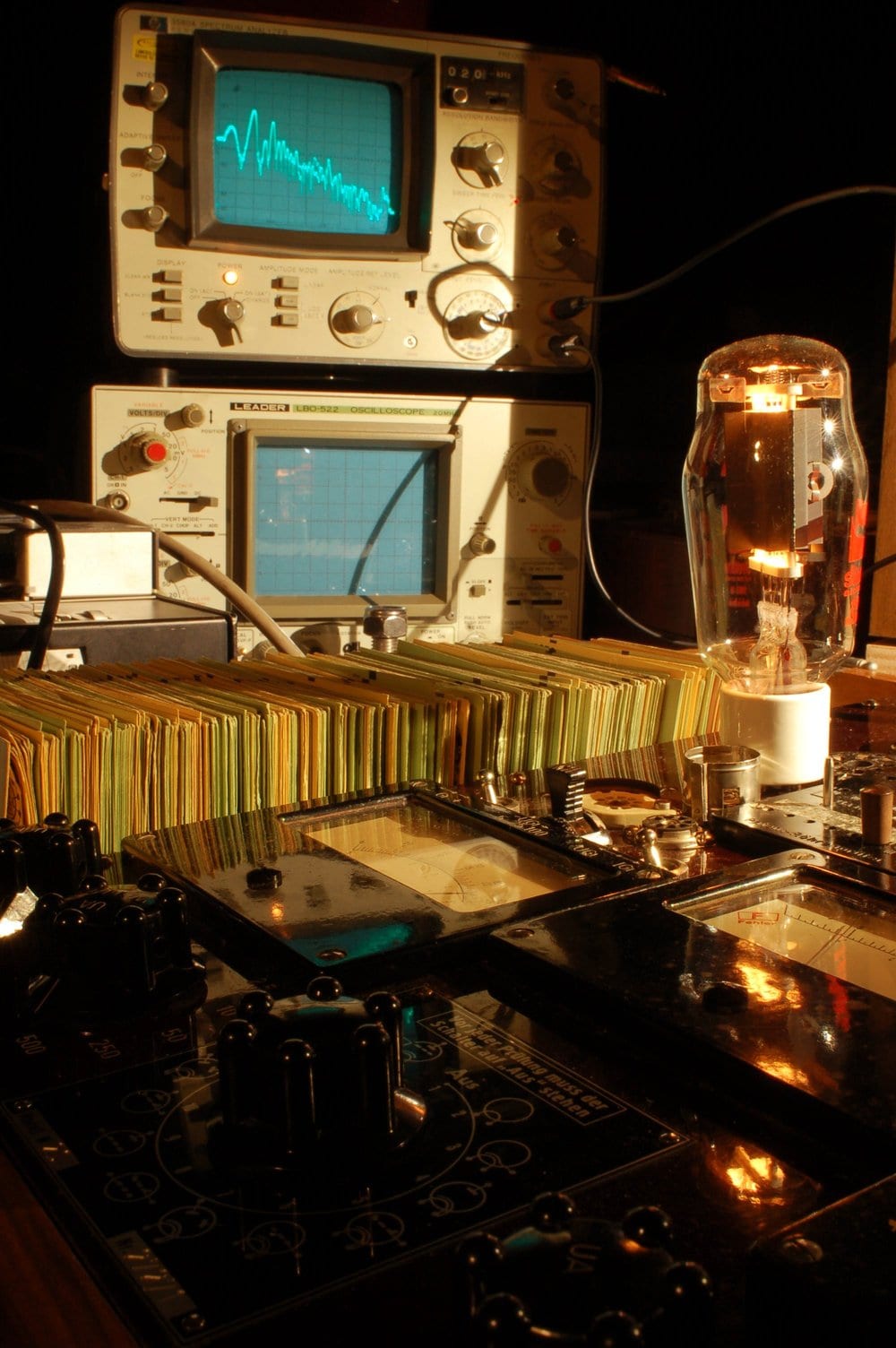

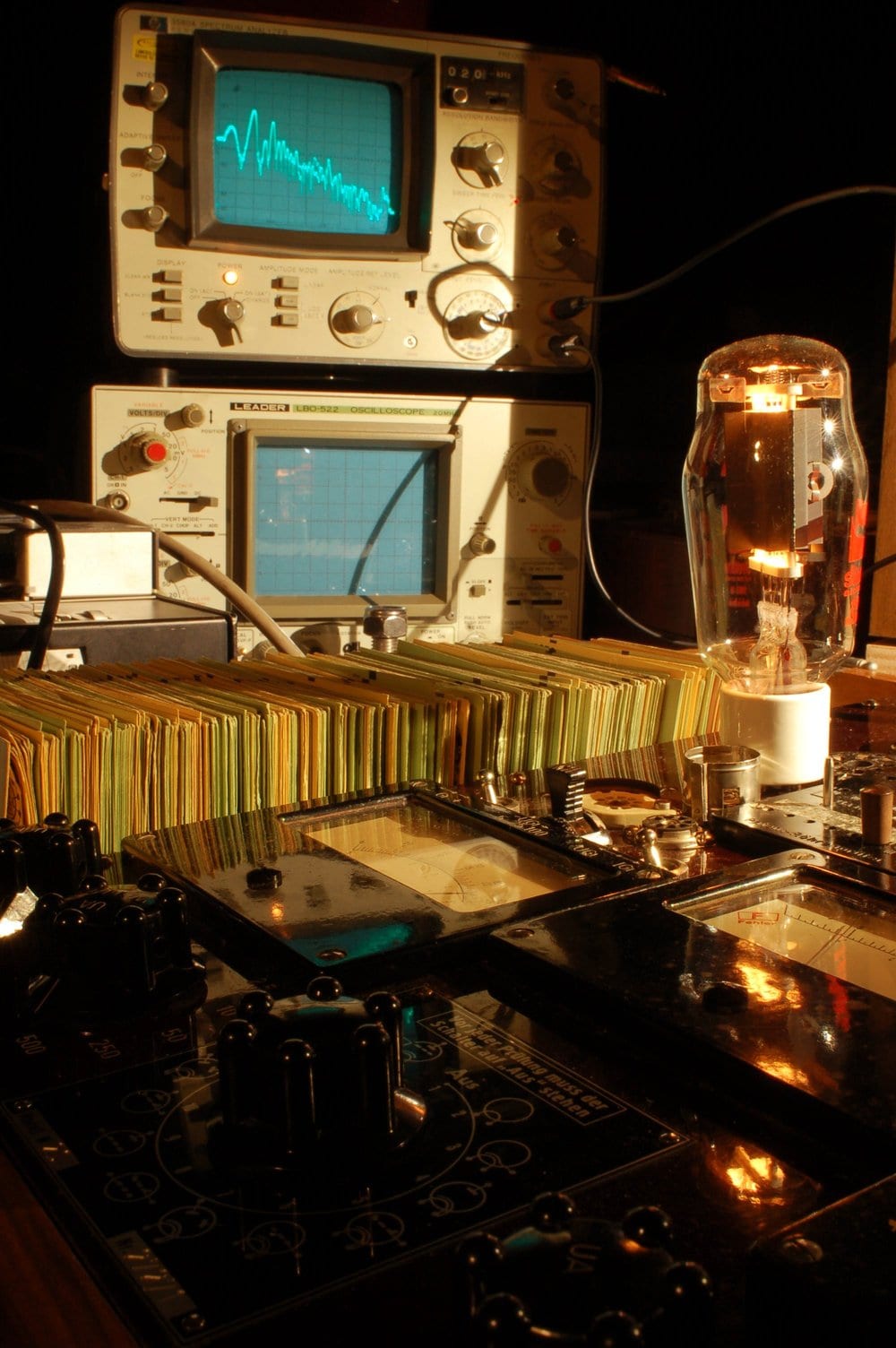

A transmitting triode tube in the lab, undergoing testing.

Photo courtesy of Agnew Analog Reference Instruments.

Then comes component selection.

A real-life resistor is never just a resistor. Its resistance varies with temperature. It exhibits inductance. Its placement in a circuit in close proximity to wiring, circuit board traces, or other components, will result in capacitance! An inductor has resistance and self-capacitance. A capacitor can also exhibit inductance and resistance effects. Even just a straight piece of wire has measurable resistance, inductance and even capacitance to nearby wires!
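One common simplified model of a real resistor, sketched below, places a small series inductance in line with the resistance and a small capacitance across the whole part. The values (100 kΩ, 10 nH, 0.5 pF) are hypothetical; real parasitics depend on the construction of the part and on the layout around it.

```python
import cmath
import math

def resistor_impedance(f: float, r: float, l_series: float, c_parallel: float) -> complex:
    """Impedance of R in series with L, the pair shunted by C (lumped model)."""
    w = 2.0 * math.pi * f
    z_rl = r + 1j * w * l_series
    z_c = 1.0 / (1j * w * c_parallel)
    return z_rl * z_c / (z_rl + z_c)

R, L, C = 100e3, 10e-9, 0.5e-12  # hypothetical resistor and parasitics

for f in (1e3, 20e3, 1e6):
    z = resistor_impedance(f, R, L, C)
    print(f"{f / 1e3:>7.0f} kHz: |Z| = {abs(z) / 1e3:.2f} kOhm, "
          f"phase = {math.degrees(cmath.phase(z)):+.2f} deg")
```

In this model the part behaves as an almost pure 100 kΩ across the audio band, but by 1 MHz it has lost about 5% of its magnitude and acquired a phase shift of roughly -17°.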

All these effects conspire to ruin the near-perfect audio performance of the triode itself in any practical circuit. The designer must go to great lengths to calculate these effects and ensure that they are not allowed to impair the performance of the circuit. As always, the limit is usually economically imposed, to keep the product marketable.

Better resistors and capacitors do exist and even better wires exist. There is nothing overly esoteric about this; these effects are all measurable if one knows what to measure. In my experience, if I can hear a difference between two components, I can most probably measure the difference and scientifically describe the underlying mechanism. I do rely a lot on measurements, but at the same time, I encourage consumers who do not have access to measurement instruments or an engineering background to trust their ears. If you hear a difference, it is usually because there is a difference!

So how are frequency and phase response related to music?

Each note has a fundamental frequency, and each musical instrument can cover a certain range of notes. There is, however, a difference in sound between two different violins playing the same note. If identically tuned, the fundamental of the note would be the same frequency for both. The characteristic sound of each violin comes from the relative amplitude and timing of the harmonics it generates. And even if the frequencies of the harmonics were all identical, differences in their phase or amplitude would produce a different wave shape for each instrument, which would excite our auditory mechanisms differently. An accurate frequency and phase response in an audio component or loudspeaker is therefore essential to preserve such subtle and not-so-subtle differences, as well as the contribution of the acoustics of the performance space as captured in a recording.

As an even more extreme example: if the frequency response of a piece of equipment or loudspeaker drops at low frequencies, within the range of an instrument such as a bass or piano, a passage consisting of notes of decreasing pitch would appear as if the performer is playing each successive note more softly, even when they were originally performed at equal loudness. It could even be that the lowest notes of the bottom octave of the range of the instrument would disappear completely, effectively altering the composition and performance!
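The descending-passage example can be put into numbers. The sketch below assumes a loudspeaker whose bass response follows a second-order (12 dB per octave) Butterworth high-pass with its corner at 40 Hz, a hypothetical figure, and computes the level of the lowest piano notes using the standard equal-tempered key formula (A4 = key 49 = 440 Hz).

```python
import math

def piano_key_frequency(key: int) -> float:
    """Equal-tempered frequency (Hz) of piano key 1..88, with A4 = key 49 = 440 Hz."""
    return 440.0 * 2.0 ** ((key - 49) / 12.0)

def butterworth_highpass_db(f: float, f_c: float) -> float:
    """Level (dB) of a second-order Butterworth high-pass: |H|^2 = x^4 / (1 + x^4)."""
    x4 = (f / f_c) ** 4
    return 10.0 * math.log10(x4 / (1.0 + x4))

F_C = 40.0  # hypothetical loudspeaker low-frequency corner, Hz

# A descending passage at the bottom of the keyboard: E2, B1, E1, B0, A0.
PASSAGE = (("E2", 20), ("B1", 15), ("E1", 8), ("B0", 3), ("A0", 1))
for name, key in PASSAGE:
    f = piano_key_frequency(key)
    print(f"{name}: {f:6.2f} Hz -> {butterworth_highpass_db(f, F_C):+5.1f} dB")
```

With this assumed roll-off, notes played at equal loudness come out progressively quieter: a few tenths of a dB down at E2, close to 3 dB down at E1, and more than 7 dB down at A0.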

In conclusion, in the frequency and phase domains, linearity refers to the ability of a circuit to maintain equal amplitude, without phase errors, at all frequencies within a specific range.

In the next episode, we will discuss dynamic linearity.

Conventional current is said to flow from anode to cathode, which is opposite to the actual electron flow, from cathode to anode…! This is just to confuse the bejesus out of outsiders and keep our trade exclusive, in much the same way that doctors still express medical terminology in a mixture of ancient Greek and Latin, languages that have not been in everyday spoken use for centuries.
